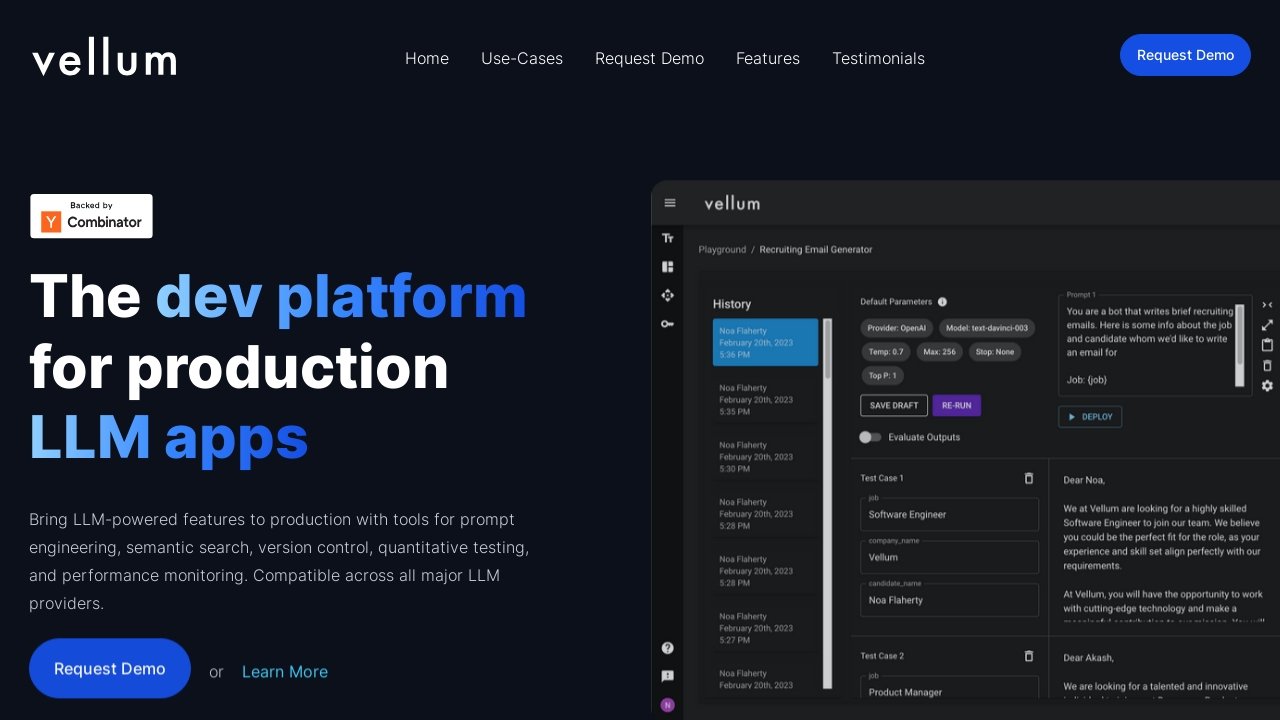

Vellum AI is a development platform for building and deploying applications powered by large language models (LLMs). It offers tools for prompt engineering, semantic search, version control, quantitative testing, and performance monitoring. Its main features are summarized below.

Key Features of Vellum AI:

- Compatibility with Major LLM Providers: Vellum AI works with all major LLM providers, so teams are free to choose the models that best fit their needs.

- Prompt Engineering: The platform includes features for comparing, testing, and collaborating on prompts and models, helping teams find an effective prompt and model combination more quickly.

- Semantic Search: Users can supply their own proprietary data as context in LLM calls, so responses are grounded in relevant information and are more accurate.

- Deployment Tools: Vellum AI provides tools for testing, versioning, and monitoring changes to LLM features in production.

- Workflow Automation: The platform supports prototyping, deploying, versioning, and monitoring complex multi-step LLM chains.

- Test Suites: Evaluate the quality of LLM outputs at scale with comprehensive testing tools.

- Monitoring and Observability: Monitor LLM features in production and gain insights into what’s working effectively.

- Rapid Experimentation: Experiment quickly without juggling browser tabs or tracking results in spreadsheets.

- Version Control: Track changes to prompts and models, and roll them back or upgrade them as needed without changing application code.

- Provider Agnostic: Avoid tight coupling to a single LLM provider by using the best model for each task and swapping models as needed.
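To make the semantic search idea concrete, here is a minimal, generic sketch of retrieving the most relevant snippets from your own data and placing them into a prompt as context. It is not Vellum AI's API: the functions `embed`, `cosine`, `top_k`, and `build_prompt` are hypothetical, and the word-count "embedding" is a toy stand-in for a real embedding model.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Hypothetical toy "embedding": lowercase word counts.
    # A real semantic search system would call an embedding model instead.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: str, docs: list[str], k: int = 2) -> list[str]:
    # Rank documents by similarity to the query and keep the best k.
    q = embed(query)
    ranked = sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    # Insert the retrieved snippets into the prompt as context for the LLM call.
    context = "\n".join(f"- {d}" for d in top_k(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "Refunds are issued within 14 days of a return.",
        "Our office is closed on public holidays.",
        "A return must include the original receipt.",
    ]
    print(build_prompt("How long do refunds take after a return?", docs))
```

Here the holiday notice is left out of the prompt because it shares no words with the question. In practice the toy `embed` would be replaced by a real embedding model and the linear scan by a vector index, but the retrieve-then-prompt shape stays the same.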

Ideal for:

- Developers and companies building LLM-powered applications.

- Teams seeking a comprehensive toolset for prompt engineering and semantic search.

- Organizations looking for a platform to streamline the deployment and monitoring of AI models.